Software-defined networking (SDN) is getting a lot of publicity as the latest technology for intelligent networking. With SDN poised to have a major impact on the future of networking, there are specific measures that can be taken today to get a better connection to the cloud.

Doing so means re-imagining three critical areas: network performance, capacity management and the impact of IT on business operations.

Network Performance

Network performance is a critical component of cloud connectivity – after all, cloud services are only as good as the network that supports them. Initial enterprise cloud deployments for software applications have typically relied on Internet VPN or IP/MPLS connections. These Software-as-a-Service (SaaS) cloud applications place relatively little stress on the network because only a small amount of information is transferred over it. As a result, today’s VPN/MPLS services meet most requirements for running applications in the cloud data center.

Greater network performance has traditionally meant “throwing bandwidth” at the problem. As a result, enterprise IT professionals commonly think of the network as a cost center and tend to take a “lowest cost, minimal application performance” approach to procurement in the wide area network (WAN).

For example, an organization might start off with two T1 circuits for 3 Mbps bandwidth and can typically scale to around 10 Mbps with that technology following a fairly linear cost curve. However, to expand further, the organization faces a decision point on whether to continue taking a tactical approach to move into the next stage of fixed circuit technology or instead perhaps look into a more strategic technology like Carrier Ethernet services that can easily scale from 10 Mbps to 10 Gbps while also offering much better cost points.

A cost center mindset may tactically reduce operational costs in the short term, but can end up causing big issues in the long term. Low cost, minimal performance technology is not typically equipped to provide the network flexibility and manageability required to support growth. And the network needs massive flexibility for strategic operations if enterprise IT is to expand its use of cloud computing services beyond simple SaaS deployments to include Platform- and Infrastructure-as-a-Service (PaaS, IaaS) cloud computing, active/active data center synchronization, inter-data center workload orchestration and other data-intensive applications.

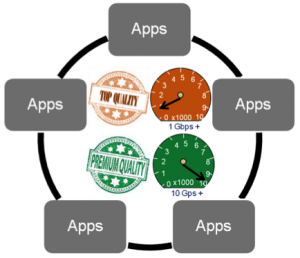

Figure 1 demonstrates what network performance could look like if IT can enable a dynamic allocation of network resources driven by the application. For example, active/active data center designs are changing the game from “recovery” to “always-available.” Any new data created from production workloads is instantaneously transferred to a second location, so failover can be immediate with no data loss and continuous availability is assured. These designs need dynamic network flexibility between the data centers to maintain performance if one site experiences heavier than normal workloads.

Inter-data center applications tend to generate large and steady data flows, unlike typical bursty Internet traffic. As a result, a big increase in a steady data flow would have an immediate impact on performance if the network could not also expand. For instance, a steady state backup of changed data may only need a 1G network, but occasionally IT may need to rapidly expand it to a 10G level for a short-duration restoration of an entire storage array.
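To put rough numbers on the 1G versus 10G restoration case, here is a minimal back-of-the-envelope sketch. The 50 TB array size and the assumption of full line-rate utilization are illustrative only and do not come from any particular deployment.

```python
def transfer_hours(data_terabytes: float, link_gbps: float, efficiency: float = 1.0) -> float:
    """Hours needed to move data_terabytes over a link running at link_gbps.

    efficiency is the fraction of line rate actually achieved (1.0 = ideal).
    """
    bits = data_terabytes * 1e12 * 8            # decimal terabytes to bits
    seconds = bits / (link_gbps * 1e9 * efficiency)
    return seconds / 3600


ARRAY_TB = 50  # hypothetical storage array size, for illustration only

for gbps in (1, 10):
    print(f"{gbps:>2} Gbps: {transfer_hours(ARRAY_TB, gbps):.1f} hours")
```

Under these assumptions the restoration takes roughly 111 hours at 1 Gbps and about 11 hours at 10 Gbps, which is the kind of gap that makes a temporary bandwidth increase attractive.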

The network will need to be agile enough to enable programmatic assignment of different levels of performance to match different application requirements over a virtualized network infrastructure.

But when we talk about “performance,” we need to move beyond the “I’ll just add more bandwidth” approach discussed above and adopt a performance-on-demand mindset. For inter-data center steady data flows, for example, effective application performance, such as actual throughput, is a broader measuring stick than raw bandwidth. Throughput can be degraded by variable network latency caused by changing routing paths, congestion and other network deviations.
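One way to see why latency matters as much as raw bandwidth: a single TCP flow cannot move more than one window of data per round trip, so its throughput is bounded by window size divided by round-trip time. The sketch below applies that bound. The 64 KB window (no window scaling) and the round-trip times are illustrative assumptions, and real flows are also limited by loss and congestion control.

```python
def max_tcp_throughput_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound on single-flow TCP throughput: one window per round trip."""
    bits_per_round_trip = window_bytes * 8
    round_trips_per_second = 1000 / rtt_ms
    return bits_per_round_trip * round_trips_per_second / 1e6


WINDOW_BYTES = 65_535  # classic maximum TCP window without window scaling

# Same link, same window: only the round-trip time changes (values are illustrative).
for rtt_ms in (5, 10, 40):
    mbps = max_tcp_throughput_mbps(WINDOW_BYTES, rtt_ms)
    print(f"RTT {rtt_ms:>2} ms -> at most {mbps:.1f} Mbps per flow")
```

If a routing change moves a flow from a 10 ms path to a 40 ms path, the bound drops to a quarter of its previous value even though the provisioned bandwidth is unchanged.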

A performance-on-demand environment could include policy-based application interfaces into the network that select the optimal network path by bandwidth, latency, cost, security or other variables.
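As a minimal sketch of what such a policy-based interface might do, the example below filters candidate paths against an application's policy and then picks the cheapest path that qualifies. The `NetworkPath` and `AppPolicy` types, the path names and all figures are hypothetical; they are not part of any vendor's API.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class NetworkPath:
    name: str
    bandwidth_gbps: float
    latency_ms: float
    cost_per_hour: float
    encrypted: bool


@dataclass(frozen=True)
class AppPolicy:
    min_bandwidth_gbps: float
    max_latency_ms: float
    require_encryption: bool = False


def select_path(paths: Sequence[NetworkPath], policy: AppPolicy) -> Optional[NetworkPath]:
    """Return the cheapest path that satisfies the policy, or None if none does."""
    eligible = [
        p for p in paths
        if p.bandwidth_gbps >= policy.min_bandwidth_gbps
        and p.latency_ms <= policy.max_latency_ms
        and (p.encrypted or not policy.require_encryption)
    ]
    return min(eligible, key=lambda p: p.cost_per_hour, default=None)


# Hypothetical inventory of paths between two data centers.
PATHS = [
    NetworkPath("internet-vpn", 1, 45, 5, True),
    NetworkPath("mpls", 1, 20, 20, False),
    NetworkPath("carrier-ethernet", 10, 8, 60, False),
    NetworkPath("encrypted-wave", 10, 8, 90, True),
]

nightly_backup = AppPolicy(min_bandwidth_gbps=1, max_latency_ms=50)
sensitive_sync = AppPolicy(min_bandwidth_gbps=10, max_latency_ms=10, require_encryption=True)

print(select_path(PATHS, nightly_backup).name)
print(select_path(PATHS, sensitive_sync).name)
```

Treating bandwidth, latency and encryption as hard constraints and cost as the tie-breaker is only one possible design; a weighted score across all variables would work equally well.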

The ability to prioritize network access by schedule is also a consideration. For example, prime time capacity might cost more than off-peak network time. Or a premium encrypted network service could be made available for more sensitive workloads.

Capacity Management

Capacity management is the second area that businesses must re-imagine to properly reap the cloud’s benefits. By capacity, we mean the data center infrastructure resources needed to run the workloads. We are all familiar with the lengthy process in most organizations to justify, get approval for and finally purchase and deploy capital IT equipment, such as a server or storage array. Sometimes additional delays are caused by the need to add headcount and training for provisioning, managing and administering the equipment. In addition to the acquisition time, the time needed to write off the capital expense can often mean a multi-year commitment to this fixed capacity. Manual resource management is also typically very labor intensive and slow to react to change.

Updates to the WAN can similarly require a long time to install and can carry a multi-year contract lock-in.

One result of this inflexible capacity management process is that applications are often rigidly assigned a fixed network capacity based on the initial installation, with little capability to manage change over time. New requests are often handled in the most tactical, expeditious manner rather than with a well-thought-out strategic plan.

To be more successful, businesses must build and provision the WAN with the capacity management flexibility to provide on-demand performance for all of their data center infrastructure needs: servers, storage and the network. Scalable and on-demand capacity for server and storage resources is already built into the cloud computing model. Whether public or hybrid, cloud providers offer almost unlimited server and storage infrastructure services with very quick turn-up.

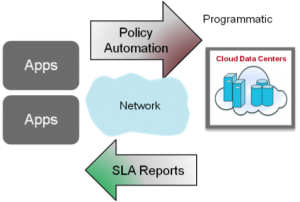

What’s also needed is application-driven network capacity, or Network-as-a-Service (NaaS), that is based on pre-determined policy guidelines. Automation can be designed into the process so that the application’s requirements trigger the necessary network connectivity resources when needed and then relinquish those resources to the pool when finished, ensuring the most efficient application performance with the lowest operational expense. This programmatic interface into network resources is a fundamental SDN tenet, as formerly fixed resources are turned into agile and efficient tools that facilitate innovation.
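The acquire-and-relinquish pattern described here can be sketched with a simple shared bandwidth pool. This is an illustration only: the `BandwidthPool` class and its `reserve` method are hypothetical names, and a real NaaS offering would expose its own provisioning interface.

```python
from contextlib import contextmanager


class BandwidthPool:
    """Tracks a shared pool of WAN capacity that applications borrow from."""

    def __init__(self, total_gbps: float) -> None:
        self.total_gbps = total_gbps
        self.allocated_gbps = 0.0

    @property
    def available_gbps(self) -> float:
        return self.total_gbps - self.allocated_gbps

    @contextmanager
    def reserve(self, gbps: float):
        """Hold gbps for the duration of a workload, then return it to the pool."""
        if gbps > self.available_gbps:
            raise RuntimeError(
                f"requested {gbps} Gbps, only {self.available_gbps} Gbps available"
            )
        self.allocated_gbps += gbps
        try:
            yield gbps
        finally:
            # Capacity is relinquished even if the workload fails partway through.
            self.allocated_gbps -= gbps


pool = BandwidthPool(total_gbps=10)

with pool.reserve(9):  # e.g. a short-lived storage array restoration
    print(f"during restore: {pool.available_gbps} Gbps free")

print(f"after restore:  {pool.available_gbps} Gbps free")
```

Using a context manager ties the lifetime of the reservation to the lifetime of the workload, so capacity cannot be left stranded after the job ends.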

Impact of IT on Business Operations

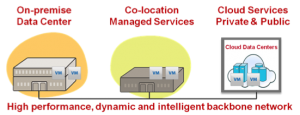

It is now becoming clear that the cloud will be viewed as more than just a remote data center running software applications and infrastructure services. The emerging model includes cloud data centers, and the capacity they provide, as a seamless extension of enterprise-owned data center capacity, plus capacity rented from any multi-tenant data center facilities.

Figure 3 shows this emerging facilities model: some capacity is owned by the enterprise, some equipment is housed in a multi-tenant data center rather than in newly built capacity, and cloud resources let IT use infrastructure it does not own on a pay-as-you-go basis. This model enables IT to access resources that are more agile, innovative and cost effective, whether they are located in its own data centers, run on its equipment in a co-location facility, or are accessed dynamically in a cloud data center.

Workloads reaching terabyte capacities will need to be balanced and shifted between all of these data centers for optimal deployment or for other requirements such as disaster avoidance. This will require enterprises to adopt a strategic network approach to ensure business performance, one that moves workloads in the timeframe required by the business, not a timeframe limited by the network.

The bottom line is that IT will be freed from spending most of its time on legacy applications and inefficient responses to ad hoc requests. Instead, it will have a strategy and architecture that let it focus on providing the right applications in the best location to drive improved business results.

Summary

While it is too early to tell the eventual impact of the intelligent network on the market, the Amazon Web Services (AWS) Direct Connect service, which uses several carrier partnerships, Time Warner Telecom’s on-demand service and a new SDN-based offering from NTT all show there is a market for such on-demand network services.

Whether built with SDN or other technologies, an intelligent network can transform a facilities-only architecture into a fluid workload orchestration workflow system, and a scalable and intelligent network can offer performance-on-demand for assigning network quality and bandwidth per application.

This intelligent network is the key ingredient that enables enterprises to interconnect data centers with application-driven programmability and enhanced performance at the optimal cost.

Jim is a Product Line Director working in Ciena’s Industry Marketing segment. He is responsible for developing and communicating solutions and the business value for Ciena’s enterprise data center networking and cloud networking opportunities. Prior to joining Ciena in 2008, Jim held roles in business development and product management for several high technology storage and networking companies in Minneapolis.

Jim holds an MBA from the University of St. Thomas and a BA from the University of Notre Dame. He recently served on the Commission on the Leadership Opportunity in US Deployment of the Cloud (CLOUD2).