Electricity providers will soon face many challenges as consumers learn to use “smart meters” and upgrade their utility set-ups. Providers need to prepare for an influx of data far larger than what they can currently handle.

Managers at utility companies need to figure out why a typical large electric utility pushes so much energy across its network yet collects only a very small amount of data about it. This gap reflects poor visibility into the electrical grid as a whole. In some cases a power outage leaves no trace in the monitoring system; the utility learns of it only when customers call to complain that the neighborhood’s power is out.

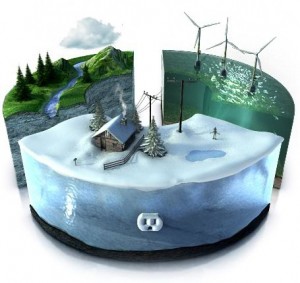

The scarcity of real-time data available to customers has prompted utility companies to develop “smart grid” software to address this problem. The software will give users a more detailed history of their power consumption, letting them compare the available power supply against their electricity demand and manage their electricity use more efficiently. In practice, consumers will be able to schedule their electricity usage strategically across peak and off-peak hours, so that costly additional power plants do not have to be brought online during periods of maximum demand.
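To make the peak versus off-peak idea concrete, here is a minimal sketch of how moving a single appliance run to a different hour changes a daily bill. The peak window, both rates, and the usage figures are hypothetical values chosen for illustration; they are not taken from any utility's tariff.

```python
# Hypothetical time-of-use tariff; none of these figures come from a real utility.
PEAK_HOURS = range(16, 21)      # assumed peak window: 4 pm up to (not including) 9 pm
PEAK_RATE_CENTS = 30            # assumed price per kWh during peak hours
OFF_PEAK_RATE_CENTS = 10        # assumed price per kWh at all other hours


def daily_cost_cents(usage_kwh_by_hour):
    """Return the day's cost in cents for a {hour_of_day: kWh} mapping."""
    total = 0
    for hour, kwh in usage_kwh_by_hour.items():
        rate = PEAK_RATE_CENTS if hour in PEAK_HOURS else OFF_PEAK_RATE_CENTS
        total += kwh * rate
    return total


# The same 3 kWh appliance run, scheduled at 6 pm versus 11 pm.
run_at_peak = {18: 3}
run_off_peak = {23: 3}

print(daily_cost_cents(run_at_peak))   # 90
print(daily_cost_cents(run_off_peak))  # 30
```

Under these assumed rates the consumer pays a third as much for the same energy, and the utility sees less demand during its most expensive hours.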

This shift in technology will create an IT challenge for utility providers, and consumer demand is driving it. Providers will have to upgrade their IT infrastructure or be caught unprepared by the onslaught of data. Many are not yet able to handle it, which is why the problem has to be addressed before the smart grid can be deployed on a widespread basis. Donald Kintner, Jr., spokesperson for the utility-sponsored Electric Power Research Institute, said that the smart grid is “in its infancy.”

Smart-grid developer Jeff Taft of Cisco Networking Systems said, “What energy companies are about to experience isn’t simply a doubling or tripling of the amount of data they will be getting. Instead, it’s going to be an increase of multiple orders of magnitude. The industry knows this and is slowly making the transition. But energy is one of those areas where you can’t just rip everything out and start all over.”

Another reason utility IT systems struggle with big data is that their central monitoring platforms run on a mix of old computer systems, and these systems do not even communicate with each other.

Glenn Booth, head of marketing at Green Energy, which develops grid management software, said, “I’ve been in control rooms where operators need to sit in chairs and swivel between six different monitors to keep everything running. We’re talking about our national power grid here, and that’s just not the way we should be running things.”

For the end user, however, a “smart meter” is simple to use: the equipment automatically generates real-time, second-by-second data on a household’s power usage. The problem is that utility companies do not yet have the software and computer systems to transmit this data. Even those that have already installed smart meters load the data only once a day, to avoid being overwhelmed by more data than they can store and manage.
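A rough back-of-the-envelope calculation shows why once-a-day loading is so much easier to manage than per-second collection. The meter count and the size of a single reading below are assumptions made purely for illustration, not figures reported by any utility.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400

# Hypothetical figures, for illustration only.
METERS = 1_000_000        # assumed number of installed smart meters
BYTES_PER_READING = 32    # assumed size of one stored reading


def readings_per_day(interval_seconds):
    """Number of readings one meter produces per day at a given interval."""
    return SECONDS_PER_DAY // interval_seconds


def daily_volume_bytes(meters, interval_seconds, bytes_per_reading):
    """Total bytes collected per day across all meters."""
    return meters * readings_per_day(interval_seconds) * bytes_per_reading


per_second = daily_volume_bytes(METERS, 1, BYTES_PER_READING)
once_daily = daily_volume_bytes(METERS, SECONDS_PER_DAY, BYTES_PER_READING)

print(f"{per_second:,}")            # 2,764,800,000,000 bytes per day
print(f"{once_daily:,}")            # 32,000,000 bytes per day
print(per_second // once_daily)     # 86400
```

Whatever the real meter count and record size are, the ratio between the two collection modes stays at 86,400, which is nearly five orders of magnitude.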

In California, the primary utility provider, Pacific Gas and Electric Company (PG&E), has installed smart meters for 80% of its customers and expects the remaining homes to have them by next year. Greg Snapper, a spokesperson for PG&E, said that for the system to be fully operational the company will need “complex event-processing engines,” which are still in the development stage.
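The article does not describe what such an engine would look like, so the following is only a generic sketch of one complex event-processing rule: flag a likely neighborhood outage when several distinct meters report a power loss within a short time window. The event fields, the `power_loss` event type, the class name, and the thresholds are all hypothetical, and the sketch assumes events arrive in timestamp order.

```python
from collections import defaultdict, deque


class OutageDetector:
    """Sliding-window rule over a stream of meter events (illustrative only)."""

    def __init__(self, window_seconds=60, threshold=3):
        self.window_seconds = window_seconds  # how far back to look
        self.threshold = threshold            # distinct meters needed to flag an outage
        # neighborhood -> deque of (timestamp, meter_id) for recent power-loss events
        self._recent = defaultdict(deque)

    def process(self, timestamp, meter_id, neighborhood, event_type):
        """Feed one event; return True if the neighborhood now looks like an outage."""
        if event_type != "power_loss":
            return False
        recent = self._recent[neighborhood]
        recent.append((timestamp, meter_id))
        # Drop events that have fallen out of the time window.
        while recent and timestamp - recent[0][0] > self.window_seconds:
            recent.popleft()
        distinct_meters = {m for _, m in recent}
        return len(distinct_meters) >= self.threshold


detector = OutageDetector(window_seconds=60, threshold=3)
events = [
    (0, "meter-1", "elm-street", "power_loss"),
    (10, "meter-2", "elm-street", "power_loss"),
    (20, "meter-3", "elm-street", "power_loss"),
]
for event in events:
    if detector.process(*event):
        print("possible outage in", event[2])
```

A rule like this would let the monitoring system notice an outage from meter data alone, instead of waiting for customers to call.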

UTILITY MISREPRESENTATIONS OF WIRELESS SMART METERS.

In the U.S. & UK and other countries where Wireless smart meters are being installed, energy use is NOT decreasing, customer UTILITY BILLS ARE INCREASING, there are additional PROBLEMS & COSTS incurred from increased SECURITY & HACKING PROBLEMS and the Wireless meters are creating ELECTRICAL INTERFERENCE PROBLEMS.

The utility information generated by Wireless smart meters is NOT real-time and it does NOT assist customers to use less energy or lower their utility bills. The information only assists the Utility Company to bill customers and shut off their power remotely.

Wireless smart meters are NOT mandated by the US Federal Energy Program, as California’s PG$E pretends.

The Utility companies are salivating over eliminating the jobs of the full-time-with-benefit meter reader employees and replacing them with phone operators in India and the Philippines who read scripts to customers over the phone for $4 per day with NO Benefits.

42 Cities & Counties in California have taken positions AGAINST Wireless smart meters and 13 have passed Ordinances prohibiting the Wireless meters.

The monetary transfers from customers to utility companies are huge, the problems are real and severe, but the advertised benefits are NOT occurring.

1. WIRELESS SMART METERS – 100 TIMES MORE RADIATION THAN CELL PHONES.

Video Interview: Nuclear Scientist, Daniel Hirsch (5 minutes: 38 seconds).

http://stopsmartmeters.org/2011/04/20/daniel-hirsch-on-ccsts-fuzzy-math/

2. WIRELESS SMART METERS – CANCER, NERVOUS SYSTEM DAMAGE, ADVERSE REPRODUCTION EFFECTS.

Video Interview: Dr. Carpenter, New York Public Health Department, Dean of Public Health (2 minutes: 23 seconds).

http://emfsafetynetwork.org/?p=3946

3. THE KAROLINSKA INSTITUTE IN STOCKHOLM (the University that gives the Nobel Prizes) ISSUES GLOBAL HEALTH WARNING AGAINST WIRELESS SMART METERS.

2-page Press Release:

http://www.scribd.com/doc/48148346/Karolinska-Institute-Press-Release